Mohamed Sayed, PhD
Senior Research Scientist at Niantic Spatial (formerly Niantic Labs)

Hello! I am passionate about 3D reconstruction/understanding/generation and creating robust, interactive vision systems and models for real-world applications.

Morpheus: Text-Driven 3D Gaussian Splat Shape and Color Stylization (Generative 3D Stylization, Gaussian Splatting, Diffusion Models)
Jamie Wynn*, Zawar Qureshi*, Jakub Powierza, Jamie Watson, Mohamed Sayed
Computer Vision and Pattern Recognition - CVPR 2025
Project Page, Paper, Code, Video

MVSAnywhere: Zero-Shot Multi-View Stereo (foundational reconstruction model)
Sergio Izquierdo, Mohamed Sayed, Michael Firman, Guillermo Garcia-Hernando, Daniyar Turmukhambetov, Javier Civera, Oisin Mac Aodha, Gabriel Brostow, Jamie Watson
Computer Vision and Pattern Recognition - CVPR 2025
Project Page, Paper, Code, Bibtex

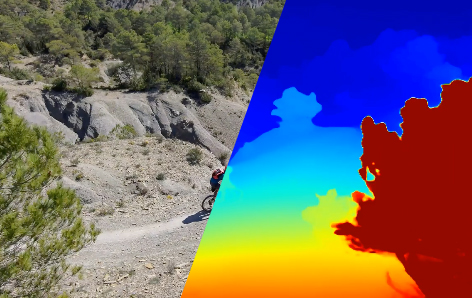

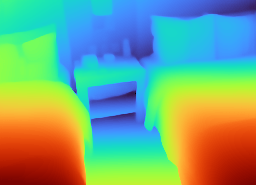

DoubleTake: Geometry Guided Depth Estimation (Flexible, accurate, and fast 3D reconstruction & depth estimation)
Mohamed Sayed, Filippo Aleotti, Jamie Watson, Zawar Qureshi, Guillermo Garcia-Hernando, Gabriel Brostow, Sara Vicente, Michael Firman
European Conference on Computer Vision - ECCV 2024
Project Page, Paper, Code, Video, Bibtex

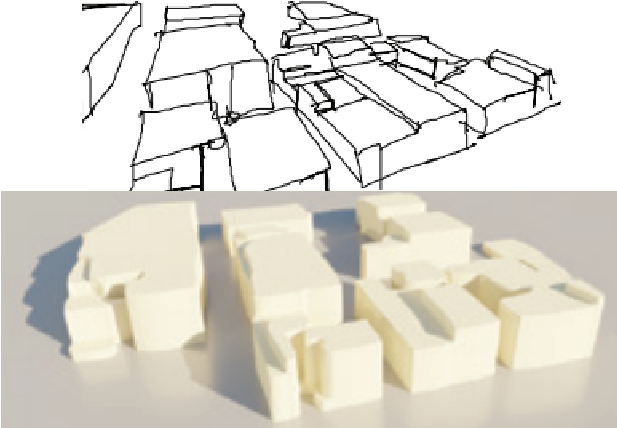

GroundUp: Rapid Sketch-Based 3D City Modeling
Gizem Esra Unlu, Mohamed Sayed, Yulia Gryaditskaya, Gabriel Brostow
European Conference on Computer Vision - ECCV 2024
Project Page, Paper, Video, Bibtex

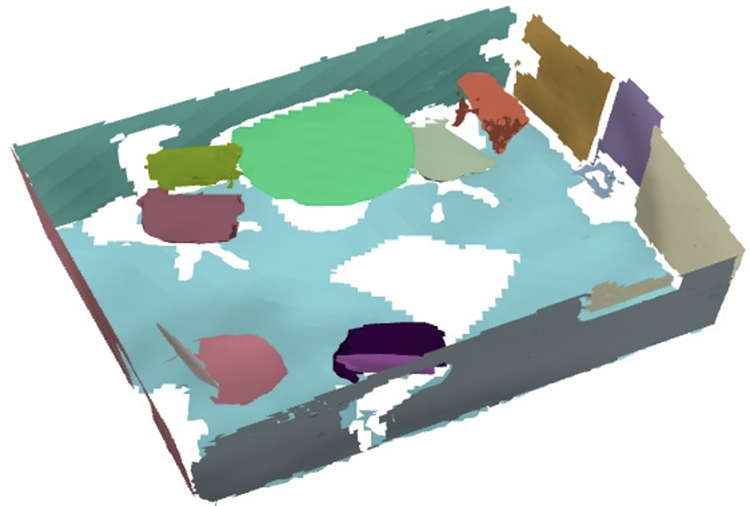

AirPlanes: Accurate Plane Estimation via 3D-Consistent Embeddings
Jamie Watson, Filippo Aleotti, Mohamed Sayed, Zawar Qureshi, Oisin Mac Aodha, Gabriel Brostow, Michael Firman, Sara Vicente
Computer Vision and Pattern Recognition - CVPR 2024
Project Page, Paper, Code, Video, Bibtex

An Empirical Study of the Generalization Ability of Lidar 3D Object Detectors to Unseen Domains
George Eskandar, Chongzhe Zhang, Abhishek Kaushik, Karim Guirguis, Mohamed Sayed, Bin Yang
Computer Vision and Pattern Recognition - CVPR 2024
Paper

Don’t Look Now: Audio/Haptic Guidance for 3D Scanning of Landmarks
Jessica Van Brummelen, Liv Piper Urwin, Oliver James Johnston, Mohamed Sayed, Gabriel Brostow
CHI Conference on Human Factors in Computing Systems - CHI 2024
Paper, Video

Virtual Occlusions Through Implicit Depth
Jamie Watson, Mohamed Sayed, Zawar Qureshi, Gabriel Brostow, Sara Vicente, Oisin Mac Aodha, Michael Firman
Computer Vision and Pattern Recognition - CVPR 2023
Project Page, Paper, Code, Video, Bibtex

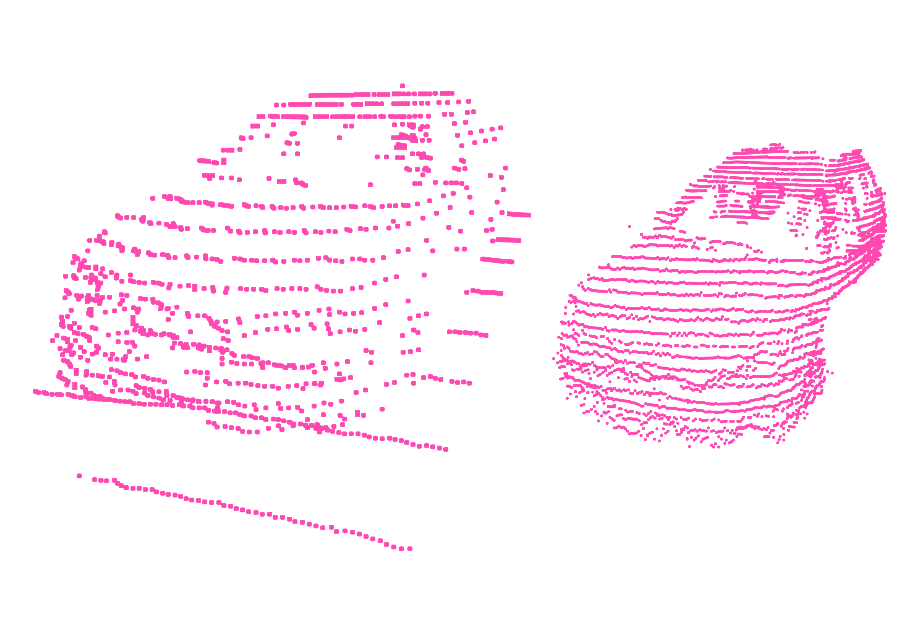

SimpleRecon: 3D Reconstruction Without 3D Convolutions
Mohamed Sayed, John Gibson, Jamie Watson, Victor Prisacariu, Michael Firman, Clément Godard
European Conference on Computer Vision - ECCV 2022
Project Page, Paper, Code, Video, Bibtex

LookOut! Interactive Camera Gimbal Controller for Filming Long Takes
Mohamed Sayed, Robert Cinca, Enrico Costanza, Gabriel Brostow
Transactions on Graphics - ToG 2022, SIGGRAPH 2022
Project Page, Paper, 30s Fast Forward, Video, Filmed Scenes, Bibtex

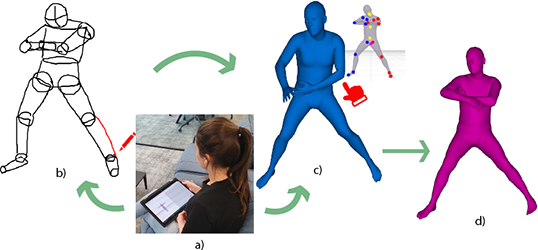

Interactive Sketching of Mannequin Poses
Gizem Esra Ünlü, Mohamed Sayed, Gabriel Brostow
International Conference on 3D Vision - 3DV 2022
Project Page, Paper, Video, Bibtex

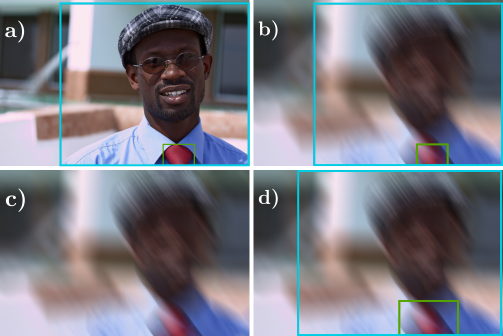

Improved Handling of Motion Blur in Online Object Detection
Mohamed Sayed, Gabriel Brostow
Computer Vision and Pattern Recognition - CVPR 2021
Project Page, Paper, Video, Code, Bibtex

June 2024 - Present

Senior Research Scientist, Niantic Spatial (formerly Niantic Labs)
Publications and production on 3D Reconstruction, Depth Estimation, Novel View Synthesis, Generative Diffusion Models, and foundational spatial intelligence. Leading the 3D reconstruction team; directly attracting customers and working on product tech.

April 2023 - June 2024

Research Scientist, Niantic, Inc.

Aug 2022 - Feb 2023

Part-time Researcher, Niantic, Inc.
Production work for SimpleRecon.

April - July 2022

Research Intern, Disney Research | Studios
Controllable Super Resolution and Diffusion Models.

May - Dec 2021

Research Intern, Niantic, Inc.
Depth Estimation and 3D Reconstruction project. Work accepted at ECCV 2022; patent pending.

Spring 2017

Software Intern, Valeo
Developed automation software for hardware integration testing. Reduced regression testing time significantly.

Summer 2016

IT Intern, Mercedes-Benz Egypt
Recognized by the CEO for reporting and fixing errors and loopholes in the employee time tracking system.

September 2018 - March 2023

PhD in Computer Science, Computer Vision (University College London)
Supervised by Prof. Gabriel Brostow.

September 2017 - September 2018

MSc Computer Graphics, Vision, and Imaging (University College London)
Distinction and Dean's List. Thesis: "Scripted Camera Control Through Visual Tracking." Thesis mark: 91/100.

September 2012 - May 2017

Bachelor of Electrical Engineering, Communications & Computer Engineering (Cairo University)
Distinction with honors. Thesis: "Improving the performance of a widely used Mentor tool with Process Mining."

Reviewer

Conferences: CVPR ('23, '24, '25, '26), ECCV ('24), ICCV ('23), SIGGRAPH ('23), SIGGRAPH Asia ('23), BMVC ('22).

University College London

Voted Best Teaching Assistant (TA) in Computer Science (2018/2019)
Machine Vision TA - 2018, 2019, 2020 (head)
Computer Graphics TA - 2020
Image Processing TA - 2018, 2019
Computational Photography and Capture TA - 2019, 2020

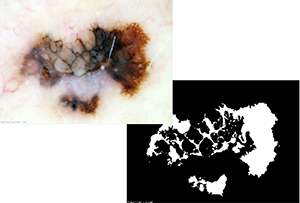

Melanoma Classification (2016)
Built a classifier for diagnosing melanoma skin lesions from dermoscopic images. Supervisor: Prof. Tawfik Ismail.

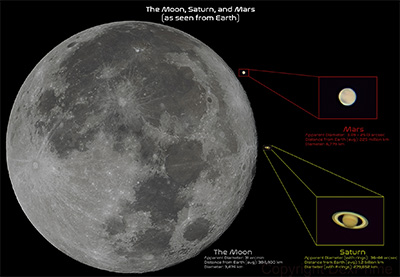

Telescope Guidance System (2016)
Amateur astrophotography with an 8-inch Newtonian telescope. Prototyped a telescope guidance system (hardware and software) with an Arduino and the ASCOM platform. Images at astrobin.com/users/DexPrime/.

“YallaCode” Android App (2015)
Led a team of four to develop an app that teaches children how to build basic computer programs. The app featured a built-in “block statement” compiler.

Musical Glove (2013)
Built an Arduino-based “Musical Glove” that tracks hand movements and alters musical notes on a synthesizer through a virtual MIDI driver.

Languages

Python, C++, C#, Assembly, MATLAB

Deep Learning Libraries

PyTorch, TensorFlow

Useful Tools

COLMAP, Inkscape, Adobe Photoshop, Adobe After Effects, Google Cloud Compute

Hardware

Arduino, Soldering, 3D Printing, Real-time Control, Serial Communication

Hobbies

Photography, Cooking, Running, Model Making |